Visible and Infrared Imaging, Complementary Tools for Engineering Analysis

Photons are generated and emitted by matter. A photon travels away from its source at the speed of light until it encounters other matter. Photons emitted as a result of thermal agitation in a solid, a liquid, or a very dense gas span the entire electromagnetic spectrum. For this thermal emission, both the photon emission rate and the distribution of emitted photons as a function of wavelength depend on the temperature of the matter.

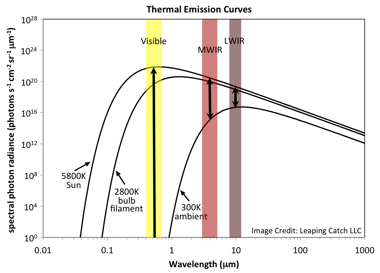

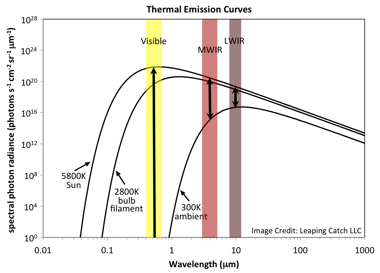

The plot at right shows ideal thermal emission curves for objects at three different temperatures: 300 K (ambient), 2800 K (an incandescent bulb filament), and 5800 K (the Sun's surface). As temperature increases, the rate at which photons are emitted increases at all wavelengths (the overall curve gets higher), and the peak of the emission curve shifts to shorter wavelengths. This relationship between the thermal emission curve and source temperature has implications for imagery acquired by sensors sensitive to different spectral regions. To help examine those implications, the spectral regions of some commonly used sensors are highlighted: the visible region and two infrared regions, MWIR and LWIR.
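The curves in the plot follow Planck's law for an ideal blackbody. As a rough sketch (our addition, not code from the original analysis), the Python below evaluates Planck's law in photon units, photons per second per square metre per steradian per metre of wavelength, and locates each curve's peak using the photon form of Wien's displacement law, whose peak satisfies x = 4(1 - e^(-x)) with x = hc/(λkT). It assumes ideal blackbodies with emissivity 1; the function names are hypothetical.

```python
import math

# Exact SI values of the defining constants.
H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K = 1.380649e-23     # Boltzmann constant, J/K


def photon_radiance(wavelength_m: float, temp_k: float) -> float:
    """Blackbody spectral photon radiance, photons / (s * m^2 * sr * m)."""
    x = H * C / (wavelength_m * K * temp_k)
    return 2.0 * C / wavelength_m**4 / math.expm1(x)


def peak_wavelength_um(temp_k: float) -> float:
    """Wavelength (um) at which the photon-radiance curve peaks."""
    # Solve x = 4 * (1 - exp(-x)) by fixed-point iteration; x converges to about 3.92.
    x = 4.0
    for _ in range(100):
        x = 4.0 * (1.0 - math.exp(-x))
    return H * C / (x * K * temp_k) * 1e6


for temp in (300.0, 2800.0, 5800.0):
    peak = peak_wavelength_um(temp)
    print(f"{temp:6.0f} K: peak near {peak:5.2f} um, "
          f"radiance at 10 um = {photon_radiance(10e-6, temp):.3e}")
```

Run as written, it should report peaks of roughly 12.2 µm, 1.3 µm and 0.63 µm, and the radiance at any fixed wavelength rises with temperature, matching the two trends described above.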

Notice the enormous gap in the visible band between the curve for the sun and the curve for an ambient temperature object. A camera sensitive to visible wavelengths can readily detect the sun's visible photons. Indeed, to prevent overexposure when imaging the sun, the rate at which visible photons reach the sensor's surface must be significantly curtailed by use of a filter and/or an extremely short exposure time. This is true despite the photon concentration having been diluted in travelling the enormous distance to the earth and further diminished by scattering in the earth's atmosphere. On the other hand, an ambient temperature object emits visible photons at such a low rate as to be practically undetectable by a visible sensor. When you turn the lights off in an isolated interior room, the objects in the room disappear from view and are not detectable by a visible sensor.
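To put a number on that gap, the sketch below integrates Planck's law in photon units across an assumed visible band of 0.4 to 0.7 µm for a 5800 K source and a 300 K object and prints the ratio. It compares emitted radiance at the source surfaces only; it does not model the distance, atmospheric, or optical losses mentioned above, and the band edges are our assumption.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K = 1.380649e-23     # Boltzmann constant, J/K


def photon_radiance(wavelength_m: float, temp_k: float) -> float:
    """Blackbody spectral photon radiance, photons / (s * m^2 * sr * m)."""
    x = H * C / (wavelength_m * K * temp_k)
    return 2.0 * C / wavelength_m**4 / math.expm1(x)


def band_photon_radiance(lo_m: float, hi_m: float, temp_k: float, steps: int = 2000) -> float:
    """Midpoint-rule integral of photon radiance over [lo_m, hi_m]."""
    dw = (hi_m - lo_m) / steps
    return dw * sum(photon_radiance(lo_m + (i + 0.5) * dw, temp_k) for i in range(steps))


VISIBLE = (0.4e-6, 0.7e-6)  # assumed visible band edges, m
sun = band_photon_radiance(*VISIBLE, 5800.0)
ambient = band_photon_radiance(*VISIBLE, 300.0)
print(f"visible photon radiance ratio, 5800 K / 300 K: {sun / ambient:.1e}")
```

The ratio exceeds 10^28, which is why an ambient-temperature object is effectively invisible to a visible camera without an external light source.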

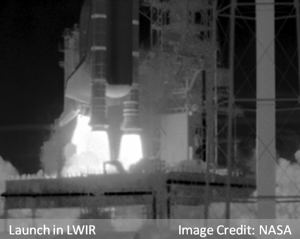

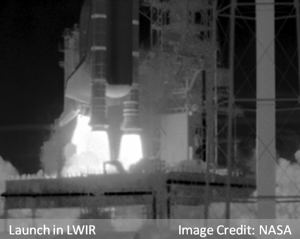

The situation is quite different at infrared wavelengths. Consider the long-wavelength infrared (LWIR) region of the spectrum. The sun emits photons at a far lower rate in the LWIR region than in the visible region, while the opposite is true for an ambient-temperature object. Taken together, the gap between the thermal emission curves for the sun and an ambient-temperature object is much narrower in the LWIR region than in the visible region. Of the three spectral regions highlighted, the gap between the high- and low-temperature emission curves is narrowest in the LWIR region. While the gap in the mid-wavelength infrared (MWIR) region is far narrower than in the visible region, it is nevertheless wider than in the LWIR region. Thus, the range of temperatures that can be imaged satisfactorily, above the sensor's noise floor and below its overexposure limit, is wider for an LWIR sensor than for an MWIR sensor. It is for this reason that infrared imagery of Shuttle launches is acquired using LWIR sensors instead of MWIR sensors. The extremely hot plume in a Shuttle launch scene severely overexposes MWIR imagery when the camera is also trying to image the ambient-temperature vehicle body.
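The dynamic-range argument can be made concrete with a deliberately simplified model. The sketch assumes a hypothetical camera whose saturation signal is 1000 times its noise floor, treats each band as a single representative wavelength (4 µm for MWIR, 10 µm for LWIR), places a 300 K blackbody at the noise floor, and solves Planck's law for the hottest blackbody that still stays below saturation. None of these numbers describe a real launch camera.

```python
import math

# Second radiation constant hc/k, m*K (from exact SI constant values).
C2 = 6.62607015e-34 * 2.99792458e8 / 1.380649e-23


def relative_signal(wavelength_m: float, temp_k: float) -> float:
    """Blackbody photon radiance at one wavelength, up to a constant factor."""
    return 1.0 / math.expm1(C2 / (wavelength_m * temp_k))


def max_unsaturated_temp(wavelength_m: float, floor_temp_k: float, dynamic_range: float) -> float:
    """Hottest blackbody whose signal is at most dynamic_range times that of floor_temp_k."""
    x_floor = C2 / (wavelength_m * floor_temp_k)
    # Solve 1/expm1(x_sat) = dynamic_range / expm1(x_floor) for x_sat.
    x_sat = math.log1p(math.expm1(x_floor) / dynamic_range)
    return C2 / (wavelength_m * x_sat)


DYNAMIC_RANGE = 1000.0  # hypothetical saturation-to-noise-floor signal ratio
for name, wavelength in (("MWIR, 4 um", 4e-6), ("LWIR, 10 um", 10e-6)):
    t_max = max_unsaturated_temp(wavelength, 300.0, DYNAMIC_RANGE)
    print(f"{name}: 300 K up to about {t_max:,.0f} K")
```

Under these assumptions the MWIR case saturates at roughly 700 K, while the LWIR case reaches roughly 12,700 K, because at long wavelengths the signal grows only about linearly with temperature once the object is much hotter than ambient. Band integration, emissivity, and real sensor response would change the numbers.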

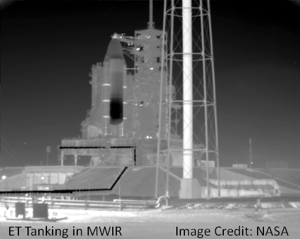

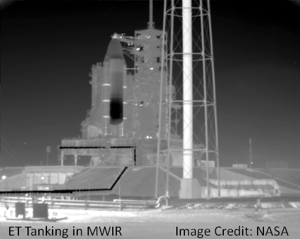

For the same reason that an LWIR camera is better suited than an MWIR camera for imaging a Shuttle launch, the MWIR camera is better suited for imaging an External Tank (ET) tanking operation. The MWIR camera accommodates a narrower range of temperatures between its noise floor and overexposure limit than an LWIR camera does. Therefore, the smallest measurable brightness variation across an MWIR image represents a smaller temperature difference than the smallest variation in an LWIR image. In addition, MWIR sensors are available with larger array sizes than LWIR sensors. Hence, MWIR imagery provides finer spatial resolution and finer temperature detail than LWIR imagery. MWIR cameras are well suited for activities like an ET tanking operation, in which an extreme range of temperatures is not involved.
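A closely related quantity, often called thermal contrast, shows the same trade-off from another angle. It is the fractional change in blackbody signal per kelvin, (1/L)(dL/dT) = (x/T) e^x/(e^x - 1) with x = hc/(λkT), obtained by differentiating Planck's law. The sketch evaluates it for a 300 K scene at representative wavelengths of 4 µm and 10 µm (our assumption) and converts a hypothetical smallest detectable brightness change of 0.1% into a temperature difference. It is an illustration, not a model of any particular camera.

```python
import math

# Second radiation constant hc/k, m*K.
C2 = 6.62607015e-34 * 2.99792458e8 / 1.380649e-23


def thermal_contrast(wavelength_m: float, temp_k: float) -> float:
    """Fractional change in blackbody photon radiance per kelvin, (1/L) dL/dT, in 1/K."""
    x = C2 / (wavelength_m * temp_k)
    # e^x / (e^x - 1) written as 1 / (1 - e^-x) for numerical stability.
    return (x / temp_k) / (-math.expm1(-x))


SMALLEST_STEP = 0.001  # hypothetical smallest detectable fractional brightness change
for name, wavelength in (("MWIR, 4 um", 4e-6), ("LWIR, 10 um", 10e-6)):
    contrast = thermal_contrast(wavelength, 300.0)
    print(f"{name}: {100 * contrast:.2f} %/K, "
          f"smallest resolvable difference about {SMALLEST_STEP / contrast:.3f} K")
```

With these assumptions the MWIR signal changes by about 4% per kelvin near 300 K versus about 1.6% per kelvin in the LWIR, so the same brightness step corresponds to a smaller temperature difference in MWIR imagery.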

In general, the hotter an object is, the brighter it will appear in an infrared image, so an infrared image provides information about the temperatures of the objects in the scene. This is also true for visible imagery *when the objects were imaged via the thermal radiation they emitted*. The italicized caveat is important. For example, when a visible sensor images a star field, the photons detected are those emitted by the stars. If the stars are part of a star cluster whose members are all at approximately the same distance, then among cluster members of similar size, the brighter ones in the image are hotter than the dimmer ones. However, for the type of scene more commonly imaged by a visible camera, temperature information is not available from the imagery. This is because, for typical subject matter, the visible photons that form an object's image do not originate with the object. Instead, the image is formed by photons that were emitted by a much hotter source (the sun, for example) and reflected off the object's surface onto the visible sensor.
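Recovering temperature from infrared brightness amounts to inverting Planck's law. The sketch below assumes the pixel signal has already been calibrated to spectral photon radiance at a single wavelength, that the surface is opaque with a known emissivity, and that reflected and atmospheric contributions can be ignored; these are simplifying assumptions, and the function names are hypothetical. It also shows why emissivity matters: treating a surface with emissivity 0.9 as a perfect emitter makes it read cooler than it is.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K = 1.380649e-23     # Boltzmann constant, J/K
C2 = H * C / K       # second radiation constant, m*K


def photon_radiance(wavelength_m: float, temp_k: float, emissivity: float = 1.0) -> float:
    """Emitted spectral photon radiance of a grey surface, photons / (s * m^2 * sr * m)."""
    return emissivity * 2.0 * C / wavelength_m**4 / math.expm1(C2 / (wavelength_m * temp_k))


def temperature_from_radiance(wavelength_m: float, radiance: float, emissivity: float = 1.0) -> float:
    """Invert photon_radiance for temperature, assuming only emitted radiance reaches the sensor."""
    x = math.log1p(emissivity * 2.0 * C / (wavelength_m**4 * radiance))
    return C2 / (wavelength_m * x)


WAVELENGTH = 10e-6  # representative LWIR wavelength (assumption)
for true_temp in (280.0, 300.0, 320.0):
    measured = photon_radiance(WAVELENGTH, true_temp)
    print(f"{true_temp:.0f} K -> radiance {measured:.3e} -> "
          f"{temperature_from_radiance(WAVELENGTH, measured):.2f} K")

grey = photon_radiance(WAVELENGTH, 300.0, emissivity=0.9)
print(f"emissivity ignored: {temperature_from_radiance(WAVELENGTH, grey):.1f} K, "
      f"emissivity 0.9 applied: {temperature_from_radiance(WAVELENGTH, grey, 0.9):.1f} K")
```

Radiance rises monotonically with temperature, so the inversion is unique; ignoring the 0.9 emissivity makes the 300 K surface read several kelvin low.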

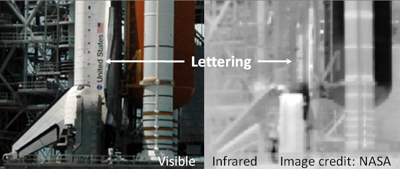

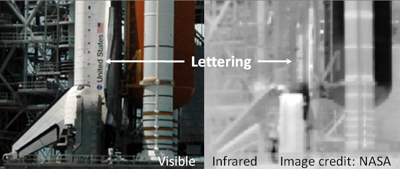

This brings up the topic of the interaction of photons with matter. When a photon encounters an object, there are three possible outcomes: the photon can travel through the object (transmission), be absorbed by the object (absorption), or reflect off the object's surface (reflection). Which of these takes place for a given photon depends on the properties of the material making up the object and on the photon's wavelength. Consider the lettering painted on the side of the Orbiter. When illuminated by the sun, the lettering reflects fewer visible photons than its surroundings and is therefore much darker in a visible image. Because the paint is opaque, so that essentially no photons are transmitted, reflecting fewer photons means that the lettering absorbs more photons than its surroundings. This causes the lettering to warm up. As a result, the lettering emits more infrared photons than its surroundings and appears brighter in an infrared image.
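The bookkeeping behind this example is conservation of photons at a surface: the absorbed, reflected, and transmitted fractions sum to one, so for opaque paint absorptance is one minus reflectance. The sketch below turns that into a rough steady-state temperature rise, assuming a solar irradiance of 1000 W/m² and that all absorbed power is shed through a single lumped heat-transfer coefficient of 20 W/(m²·K), which is hypothetical. The reflectance values for the lettering and its surroundings are invented for illustration; real Orbiter surfaces, conduction into the structure, and radiative exchange would all change the numbers.

```python
def absorptance(reflectance: float, transmittance: float = 0.0) -> float:
    """Fraction absorbed, from absorptance + reflectance + transmittance = 1."""
    a = 1.0 - reflectance - transmittance
    if not 0.0 <= a <= 1.0:
        raise ValueError("reflectance + transmittance must lie between 0 and 1")
    return a


def steady_rise_k(reflectance: float, irradiance_w_m2: float, h_w_m2k: float) -> float:
    """Temperature rise above ambient if absorbed power equals h * (T - T_ambient)."""
    return absorptance(reflectance) * irradiance_w_m2 / h_w_m2k


IRRADIANCE = 1000.0  # W/m^2, assumed sunlight
H_EFF = 20.0         # W/(m^2*K), hypothetical lumped heat-transfer coefficient
for name, refl in (("dark lettering", 0.05), ("light surroundings", 0.75)):
    print(f"{name}: absorbs {absorptance(refl):.0%}, "
          f"warms about {steady_rise_k(refl, IRRADIANCE, H_EFF):.1f} K above ambient")
```

In this simplified picture the darker paint absorbs almost four times as much power, so it settles at the higher temperature and, other things such as emissivity being equal, appears brighter in the infrared.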

The wavelengths of the reflected photons are what give an object its color in the visible part of the spectrum. For example, the Space Shuttle's External Tank (ET) appears orange because its surface absorbs visible photons of all wavelengths except those in the orange part of the spectrum. Those "orange photons", which originated with the sun, are reflected from the ET's surface toward the sensor. The paint on the Solid Rocket Boosters (SRBs) reflects all visible wavelengths about equally and thus appears white. The lettering on the Orbiter's side appears black because that paint absorbs nearly all visible photons incident on it. Color is an important tool that analysts use to help identify different types of debris. A visible sensor that provides color information actually combines three sensor sub-types, each sensitive to a different range of visible wavelengths. At this time, the majority of infrared sensors are sensitive to only one relatively broad range of wavelengths and thus do not provide "color" information.
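A three-channel color sensor can be sketched as three detectors, each averaging a surface's reflectance over its own passband. The passbands (blue 400 to 500 nm, green 500 to 600 nm, red 600 to 700 nm), the flat illumination, and the reflectance curves below are all idealized assumptions rather than properties of any real camera or Shuttle paint. The point is that the ratio of the three channel signals, not any single one, is what encodes color.

```python
import math
from typing import Callable, Dict, Tuple

Reflectance = Callable[[float], float]  # wavelength in nm -> reflectance, 0 to 1

# Idealized, non-overlapping passbands in nm (assumption).
BANDS: Dict[str, Tuple[float, float]] = {
    "blue": (400.0, 500.0),
    "green": (500.0, 600.0),
    "red": (600.0, 700.0),
}


def channel_signal(reflectance: Reflectance, band: Tuple[float, float], steps: int = 200) -> float:
    """Mean reflectance across a passband under flat (equal-energy) illumination."""
    lo, hi = band
    step = (hi - lo) / steps
    return sum(reflectance(lo + (i + 0.5) * step) for i in range(steps)) / steps


def rgb(reflectance: Reflectance) -> Dict[str, float]:
    return {name: channel_signal(reflectance, band) for name, band in BANDS.items()}


def white_paint(nm: float) -> float:
    return 0.9   # reflects all visible wavelengths about equally


def black_paint(nm: float) -> float:
    return 0.03  # absorbs nearly everything


def orange_paint(nm: float) -> float:
    # Dark at short wavelengths, reflective above about 580 nm (hypothetical curve).
    return 0.05 + 0.75 / (1.0 + math.exp(-(nm - 580.0) / 10.0))


for name, surface in (("white", white_paint), ("orange", orange_paint), ("black", black_paint)):
    print(name, {k: round(v, 2) for k, v in rgb(surface).items()})
```

White gives three equal channels, black gives three near-zero channels, and the hypothetical orange surface gives a strong red, weaker green, and almost no blue response. A single broadband infrared detector would collapse these to one number each.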

The choice of visible or infrared camera depends on the purpose to be served by the imagery acquired. Visible cameras provide imagery having superior spatial and temporal resolution in comparison to infrared cameras. The color information available in visible imagery is an important identification tool that is not available from infrared imagery. Analysis of visible imagery is relatively straightforward, since people spend a lifetime interpreting visible scenes as part of everyday experience. Working at infrared wavelengths has its own advantages. Because an infrared camera detects an object's thermal emission directly, an external source of photons is not necessary. An infrared camera can "see in the dark" and can thus be a valuable tool for imaging features in shadow as well as during nighttime launches and landings. Infrared imagery provides temperature information unavailable from visible imagery, and this temperature information can also be an important identification tool. And because an infrared camera detects thermal emission, it offers the potential to locate the site of a debris strike, where the kinetic energy of the debris is converted into relatively long-lived thermal energy.
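The debris-strike idea rests on a simple energy balance: kinetic energy ½mv² that is converted to heat raises the temperature of the material near the impact by ΔT = Q/(m_h c), where m_h is the heated mass and c its specific heat. The inputs below (a 20 g fragment at 150 m/s, half of its kinetic energy deposited as heat into 10 g of material with c = 900 J/(kg·K), roughly that of aluminum) are hypothetical, and the sketch ignores conduction and radiation away from the site, which are what eventually erase the signature.

```python
def impact_temperature_rise(debris_mass_kg: float, speed_m_s: float,
                            heat_fraction: float, heated_mass_kg: float,
                            specific_heat_j_kgk: float) -> float:
    """Temperature rise of the heated region, ignoring conduction and radiation losses."""
    kinetic_energy_j = 0.5 * debris_mass_kg * speed_m_s**2
    heat_j = heat_fraction * kinetic_energy_j
    return heat_j / (heated_mass_kg * specific_heat_j_kgk)


# Hypothetical inputs chosen for illustration only.
rise = impact_temperature_rise(debris_mass_kg=0.020, speed_m_s=150.0,
                               heat_fraction=0.5, heated_mass_kg=0.010,
                               specific_heat_j_kgk=900.0)
print(f"temperature rise at impact site: about {rise:.1f} K")
```

With these inputs the site warms by about 12.5 K in this idealized case; because the rise scales with the square of impact speed, doubling the speed would quadruple it.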

Visible and infrared cameras both provide valuable data to the analyst. The two types of cameras complement each other with visible cameras providing information not available from infrared cameras and vice versa. Acquiring launch imagery with both types of cameras ensures the analyst will have the appropriate tools to meet a variety of diverse needs.
